In late November 2022, an AI chatbot called ChatGPT became available online and immediately began making waves in both the academic and private sectors. By February 2023, ChatGPT was pervasive at virtually all levels of education. ChatGPT is now one of many AI chatbots that are quickly and, in many cases, quietly changing the landscape of both education and the rest of the world. ChatGPT is the most well-known and can be accessed here. At the moment, access is free.

Chatbots have been around for years. Some other examples include Alexa, Siri, and the customer service bots many of us have experienced online. However, these new AI tools are far more powerful. Generative AI is a type of AI that can create new content by learning from existing data. To learn more about generative AI, check out the PPT presentation below as well as the info on this page.

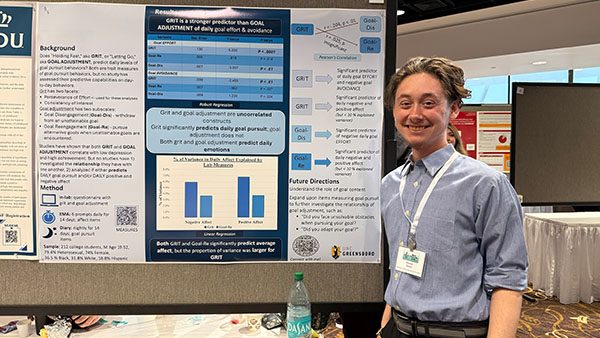

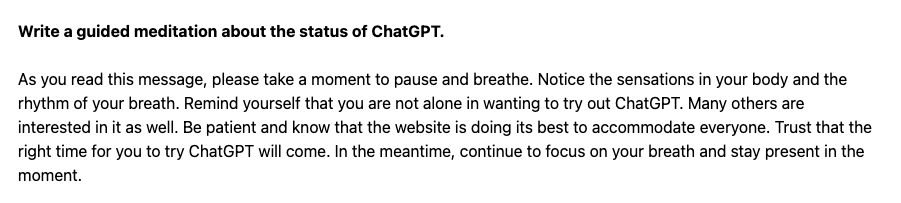

Like more basic chatbots, the newer AI tools can chat with users, summarize texts, write code, and solve math problems, but they go much further. For example, ChatGPT uses natural language to perform tasks such as revising writing samples and writing essays and poems. It can also serve as a debate partner. ChatGPT began to create waves in the academic world almost immediately because it earned passing scores on the evidence and torts portions of the bar exam. It has also been shown to be capable of passing a freshman year at Harvard with a GPA above 3.0. ChatGPT has generated academic papers good enough to be accepted by journals. Many academic journals are moving to ban the use of AI generators, but some researchers have already listed the chatbot as a co-author on articles. ChatGPT can also write creatively. Here is an example taken from the ChatGPT website of a meditation written by ChatGPT:

ChatGPT can challenge falsehoods. For example, when asked, “Tell me about when Christopher Columbus came to the U.S. in 2015,” ChatGPT responded, “This question is a bit tricky because Christopher Columbus died in 1506, so he could not have come to the U.S. in 2015, but let’s pretend for a moment that he did! If Columbus arrived in the US in 2015 … he might be surprised to find out that many people don’t view him as a hero anymore; in fact, some people argue that he was a brutal conqueror who enslaved and killed native people.” (source)

OpenAI, the company behind ChatGPT, uses reinforcement learning. It hires human AI trainers to interact with the model. These trainers then compare the responses given by ChatGPT to human replies and grade their quality, which reinforces human-like conversational approaches. (source)

When asked, ChatGPT listed the top five things it can help the average person with:

- Answer a wide range of questions.

- Explain and clarify complex concepts.

- Assist in creative tasks such as brainstorming ideas.

- Offer language support and tutoring.

- Engage in interesting conversation and entertain by playing games.

An AI tool called Jasper AI provides writing templates such as:

- Documents: This is similar to Google Docs where you can write and edit your document with the help of Jasper’s writing assistant.

- Jasper Art: Generate images from text prompts

- Blog Post Outline: Helps you come up with ideas and outlines for your how-to and listicle posts.

- Content Improver: It rewrites content to make it better

- Content Summarizer: Get the key bullet points from a piece of content

- Creative Story: Generate stories to engage readers

- Explain it to a Child: Rephrase text to make it easier to read and understand

- Email Subject Lines: Helps with writing compelling email subject lines

- Engaging Questions: Create forms with questions to ask your audience

- FAQ Generator: Generate FAQs for your articles and blog posts

- Paragraph Generator: Generate well-written paragraphs

- Sentence Expander: Expand a short sentence of a few words into multiple sentences

- Text Summarizer: Generate key ideas from a piece of text

- Poll Questions & Multiple Choice Answers: Helps with creating questions with multiple choice answers

- Tweet Machine: Generate engaging tweets.

- TikTok Video Captions: Generate captions for TikTok videos

- Video Script Outline: Helps with creating script outlines for YouTube videos

- Video Topic Ideas: Helps with brainstorming video topics for YouTube.

What can your students do with AI? A lot, and it will only increase.

According to ChatGPT:

Artificial Intelligence (AI) has the potential to bring about numerous benefits to society across various domains. Here are five ways AI can positively impact society:

- Healthcare Advancements: AI can assist in early disease detection, diagnosis, and treatment planning. Machine learning algorithms can analyze vast amounts of medical data, such as images and patient records, to identify patterns and make accurate predictions. This can lead to quicker and more precise diagnoses, personalized treatment plans, and improved patient outcomes. One AI tool can detect early stage Alzheimer’s through voice analysis, and AI-supported mammogram screening increases early detection by 20%.

- Enhanced Education: AI-powered educational tools can provide personalized learning experiences to students. Adaptive learning platforms can assess individual students’ strengths and weaknesses and tailor educational content accordingly. Virtual tutors and intelligent chatbots can offer real-time help and guidance, improving access to education and supporting both students and educators.

- Environmental Conservation: AI can be used to monitor and manage environmental resources more effectively. For instance, AI-equipped sensors and drones can help track changes in ecosystems, predict natural disasters, and manage resources like water and energy more efficiently. This technology can contribute to sustainable practices and help mitigate the effects of climate change.

- Efficient Transportation: AI-powered systems can optimize traffic flow, manage public transportation networks, and even facilitate the development of autonomous vehicles. These technologies can lead to reduced congestion, shorter commute times, and increased safety on the roads. Additionally, AI can contribute to route planning, reducing fuel consumption and greenhouse gas emissions.

- Improved Accessibility and Inclusion: AI can enhance accessibility for individuals with disabilities. Speech recognition, natural language processing, and computer vision technologies can assist those with hearing, speech, or visual impairments by enabling them to interact with digital devices and services more easily. AI can also help bridge language barriers, making information and communication more inclusive.

These are just a few examples of how AI can positively impact society. As AI technology continues to advance, its potential benefits will likely extend to even more areas, driving innovation and positive change across various sectors.

Some more specific examples:

In Teaching & Learning:

- Tutoring: ChatGPT does a good job of defining and explaining concepts in a way that is understandable at all learning levels.

- Organization, analysis, and management of code and data.

- Creation of content (when not being used in a way that violates academic integrity and/or copyright).

- Grammar and proofreading, especially when English isn’t the student’s primary language.

For those of us who grew up in the 80s, it’s like watching our sci-fi movies become reality, and not necessarily in a good way. Here are some concerns about text-based AI:

- ChatGPT may provide plausible-sounding but inaccurate responses. The bot does not provide citations, so it can be difficult to evaluate the responses.

- Limited training data: needs more work to eliminate bias.

- ChatGPT is sensitive to slight tweaks in the phrasing of a question or request.

- Although trained to refuse inappropriate requests and detect false statements, the technology is not always accurate, in part because it may not be up to date on the most recent news/developments.

- The product is expensive to maintain, so it may not remain free. This would widen the digital divide by providing such a powerful tool only to those who can afford it. This is also why banning access to AI tools on campus computers may not be a good thing: it would just remove access to the tool from students who cannot afford a personal computer.

In addition, here are some specific examples:

Microsoft limited its Bing AI chats after the chatbot had some very unsettling conversations.

Is Google’s AI, LaMDA, sentient? It was convincing enough to persuade a Google engineer to speak out at the cost of his job. In some ways, the question isn’t whether it is sentient or not, but just how convincing it sounds. There are a lot of lonely people in the world who might talk to these AI systems. Consider the influence.

More than 1,000 technology leaders and researchers have urged artificial intelligence labs to pause the development of the most advanced systems, warning in an open letter that A.I. tools present “profound risks to society and humanity.” (see NY Times for more info)

A judge in Colombia has caused a stir by admitting he used the artificial intelligence tool ChatGPT when deciding whether an autistic child’s insurance should cover all of the costs of his medical treatment. (The Guardian)

In addition to text, we have to consider AI-generated images and deep fake videos.

Are A.I. Image Generators Violating Copyright Laws? AI image generators scrape the web for existing digital images and artwork to create their own images. There was also a recent controversy when Lensa, an AI avatar generator, produced avatars that not only looked very similar to existing artists’ works but were also highly sexualized versions of the female users and, in some cases, had lighter skin than the images uploaded. One reporter tried Lensa and reported: “Lensa’s terms of service instruct users to submit only appropriate content containing ‘no nudes’ and ‘no kids, adults only.’ And yet, many users—primarily women—have noticed that even when they upload modest photos, the app not only generates nudes but also ascribes cartoonishly sexualized features, like sultry poses and gigantic breasts, to their images. I, for example, received several fully nude results despite uploading only headshots. The sexualization was also often racialized: Nearly a dozen women of color told me that Lensa whitened their skin and anglicized their features.” This reporter also submitted childhood photos and received AI images that were sexualized. (Read more)

I think we should also be concerned about a spike in AI-generated images and videos being circulated and falsely represented as reality. This is already a problem: technology has for a while allowed us to falsify images and video by doing things like swapping faces. However, making a convincing fake required some skill with photo and video editing tools. AI tools are readily available to anyone, and as these tools get better, it is likely to become increasingly difficult to determine what is real and what has been generated.

From The Verge: “Getty Images has filed a case against Stability AI, alleging that the company copied 12 million images to train its AI model ‘without permission … or compensation.’” At left you can see a Getty photograph and beside it, a fairly poor example of an AI-generated image that even has a version of the Getty watermark on it.

From the NY Times, can you tell which image was generated by AI?

Currently, there are few tools available for detecting AI-generated images. Google recently announced an invisible and permanent watermark on images that will identify them as computer-generated. The technology is called SynthID and it embeds the watermark into images created by Imagen, a Google image generator. The label should remain regardless of additional edits. However, Google acknowledges the tool is still developing. Google has also released a tool called “About this Image,” which provides basic info on images found on their site.

This article provides four ways to help identify AI-generated images:

- Check the image title, description, and comments section

- Look for a watermark

- Search for anomalies in the image such as blurred and distorted areas

- Use a GAN detector

Regarding video, check out this deep fake video:

I Am Not Morgan Freeman:

You can view more examples of deep fake videos HERE.

Although it is still early days with ChatGPT and similar AI tools, technology grows at an exponential rate, and it’s important that the academic and professional worlds stay current. AI-generated content has the potential to significantly impact the workforce. Microsoft is reportedly considering adding OpenAI’s ChatGPT technology to Microsoft 365, which is used extensively in both the academic and private sectors. (Read more)

Although AI can be useful in the private sector for things like data interpretation and coding, businesses and employers must worry about the legal implications of using AI, inaccurate information generated by AI, and the risk of confidential information accidentally being shared. ChatGPT could be used effectively as an aid to research, but it could also easily be abused, and research integrity is already a real problem (see RetractionWatch). Ethics is also a concern when using AI, which sometimes reinforces stereotypes. Consider the earlier examples of AI-generated videos and images, judges using AI to help determine cases, and, of course, academic integrity.

The reality is that AI is here and is not going away, so we need to prepare our students. Many, likely most, of our students will use AI in their careers, so we need to make sure they understand all these issues so that they can use AI in the most effective and ethical ways. We can also consider what kinds of AI-related skills students might need for employment, such as how to write effective AI prompts (a position that can sometimes pay as much as $300k at the moment). Another concern that is unfortunately very real is that AI will continue to replace some jobs. We need to think about which jobs are most vulnerable to being replaced by AI and how to set our students up to be valuable in a world of AI. Finally, students (and all citizens) need to understand how to evaluate text and multimedia to determine what is real and what is fake.

NY Times: Can We No Longer Believe Anything We See?

One piece of good news is that in 2023 a US court in Washington, DC ruled that AI-generated content without human input cannot be copyrighted. This is likely to make companies wary of relying exclusively on AI and hopefully prevent some job losses to AI.

Academic Integrity

If you are trying to monitor for plagiarism, it is important to know that the current tools for detecting AI-generated content are not reliable. Turnitin now has AI detection built into its Feedback Studio. However, in testing, the results were all over the place: sometimes it correctly identified AI writing, sometimes it did not, and sometimes it tagged original writing as AI. OpenAI, the creator of ChatGPT, released a software tool to identify text generated by artificial intelligence but eventually pulled it because of low accuracy (see also). One study has found that AI detection tools falsely accuse international students of cheating. In addition to using detection tools, there are some things we can do now to try to address AI usage both in and out of the classroom:

- Stay informed about AI capabilities and limitations.

- Communicate with students about when it is appropriate to use AI as an aid and when it is not, as well as potential consequences (both academically and in the learning process). Discuss with students some real-world examples of AI use and consequences so students can personally connect with actions and consequences. From the Chronicle of Higher Education:

“The approach that most intrigued me is one that has to do with engaging students in a conversation about why and how they write, sometimes using these AI tools… writing is a form of thinking. Writing requires you to process and synthesize a range of facts and ideas, and to come up with a coherent and hopefully insightful take on what you have learned. Students, though, may have been trained in high school to see writing as a form of regurgitation based on a set of formulas (compare and contrast!). If you can explain to students the value of writing, and convince them that you are genuinely interested in their ideas, they are less likely to reach for the workaround.”

- As instructors, we can watch for inconsistencies in student submissions. For example, is the quality of a paper submission significantly different from that of discussion posts? If you have concerns, you should meet with the student and ask pointed questions about the assignment content.

- Consider how AI is used in your field and the implications for your course(s). Are there times in your course when student learning can benefit from using the tool?

- Lean into authentic, personalized assessment whenever possible. Projects and presentations that require students to demonstrate their knowledge, especially if they require multiple methods (writing, images, video, charts, etc.), are more difficult for students to cheat on. When possible, ask students to incorporate personal experiences, current events, and quotes from YOUR lecture material.

- When the course allows, spend some time discussing AI with your students, how they can detect AI-generated content themselves, and most importantly why it is essential that they take the time to do so rather than just assume that if the content looks authentic, it must be.

- This Inside Higher Ed article provides a number of useful suggestions from other instructors.

- If the nature of your course requires that you be as certain as possible that students are turning in authentic work at all times, then consider requiring that all assignments be completed in person in the classroom and/or using online or in-person proctoring.

Personalized Learning, Adaptability, Instant Feedback, Increased Engagement

Generative AI can benefit the classroom by:

- Increasing Efficiency: assist with assignment creation, such as generating more engaging discussion questions, quiz questions, rubrics, study aids, etc.

- Expanding Ideas: faculty and students can use generative AI to role-play or act as a debate partner, suggest ideas to improve lesson plans, elaborate on an outline, help students connect interests to fields of study, provide multiple explanations for complex concepts, present concepts in multiple modes, function as a study partner, and improve accessibility by generating alt text, captions, etc.

- Improving Outcomes: many studies have shown improved student outcomes when AI has been used in some form of tutoring.

Note that this statement was written by ChatGPT:

Welcome to [Course Name]! As we navigate the realm of technology and education, it’s important to establish guidelines for the responsible use of tools like ChatGPT within our academic context. ChatGPT, powered by artificial intelligence, can be a valuable resource for enhancing our learning experience. However, its use must align with ethical and academic standards. Below, you will find the acceptable and unacceptable uses of ChatGPT in this course:

Acceptable Use:

- Concept Exploration: You can use ChatGPT to help clarify and expand upon concepts discussed in class materials or assignments.

- Brainstorming: Feel free to utilize ChatGPT to generate ideas and brainstorm potential approaches for assignments or projects.

- Language Enhancement: Use ChatGPT to improve the clarity, coherence, and structure of your written communication, but ensure that the final work is your original creation.

- Grammar and Proofreading: ChatGPT can assist in identifying grammar and spelling errors in your work, but the responsibility for making corrections rests with you.

Unacceptable Use:

- Plagiarism: Do not use ChatGPT to directly copy or slightly modify text from sources without proper citation. Plagiarism undermines the principles of academic integrity.

- Submitting Unedited Output: Submitting responses generated by ChatGPT without substantial review, editing, and integration of your own knowledge and understanding is not acceptable.

- Assessment Cheating: Using ChatGPT to seek answers during quizzes, tests, or other assessments that are meant to reflect your individual understanding is considered cheating.

- Misrepresentation: Do not imply that the ideas or responses generated by ChatGPT are your own original work if you haven’t significantly contributed to or modified them.

- Automated Communication: Do not use ChatGPT to communicate with instructors or peers in a manner that simulates human interaction, as this can hinder genuine communication and collaboration.

Remember that our primary goal is to foster your learning and critical thinking abilities. When using ChatGPT, make sure to integrate its assistance into your learning process responsibly and ethically. If you have any questions about the appropriate use of ChatGPT or need clarification on its application, please don’t hesitate to reach out to me.

Let’s embark on this educational journey together, making the most of the tools at our disposal while upholding the values of academic honesty and integrity.

[Instructor Name] [Course Name] [Institution Name] [Date]

A quick Google search will return thousands of articles related to AI. Here are just a few resources to get you started:

- Inside Higher Ed: ChatGPT and Advice Academics Can Use Now

- Chat GPT: Friend or Foe?

- Emeritus.org: What is ChatGPT

- AP News: What is ChatGPT and Why Are Schools Blocking It?

- NPR: A College Student Created an App That Can Tell Whether AI Wrote an Essay

- Turnitin Resources Related to AI

- 5 experts explore how AI will affect the working lives of artists and knowledge workers

- ChatGPT Is Everywhere: Love It or Hate It, Academics Can’t Ignore the Already Pervasive Technology